Market research trends in the tech industry: What's changing in 2026

Carolin Bolz · 30.04.2026 · 16min read


Speed has always been a pressure point for market researchers. But in 2026, the conversation has shifted. It's no longer just about doing things faster – it's about whether doing things faster actually helps you make better decisions.

That tension – efficiency vs. effectiveness – was at the heart of Appinio's latest webinar, What's Hot in Market Research 2026. Hosted by Louise Leitsch (VP Research) and Fiete Voß (Head of Product Strategy), the session drew an engaged audience of researchers eager to understand where the industry is heading and what it means for their day-to-day work.

In this article we’ll distill the key themes, with a particular lens on what they mean for researchers working in or alongside the tech industry, where product cycles are compressed, decisions need to happen fast, and the pressure to demonstrate research ROI has never been higher.

So what are the hottest market research trends for 2026? Read on to find out…

The core tension: fast isn't enough on its own

If there's one idea that ran through the entire webinar, it's this: AI can make your research process dramatically faster, but if you're asking the wrong questions to the wrong people using the wrong methods, you'll just get wrong answers more quickly.

When a team relies on poor methods, all the efficiency gains in the world produce results you can't actually use. That's not a criticism of AI – it's a reminder that AI is an accelerator, not a replacement for research rigour.

This is especially relevant in the tech industry. When you're launching a feature update, running a brand tracking study across multiple markets, or testing messaging for a new product line, the speed-to-insight advantage is real and valuable. But if those insights are built on shaky foundations – biased samples, vague questions, siloed data – no amount of AI-generated analysis will fix that.

The solution Appinio is pursuing isn't just building faster tools. It's building smarter ones, and pairing them with research expertise that keeps the output honest.

AI across research: what's live, what's coming

Here’s how we think AI can best be used at each research stage.

Discovery: Synthetic personas and smarter search

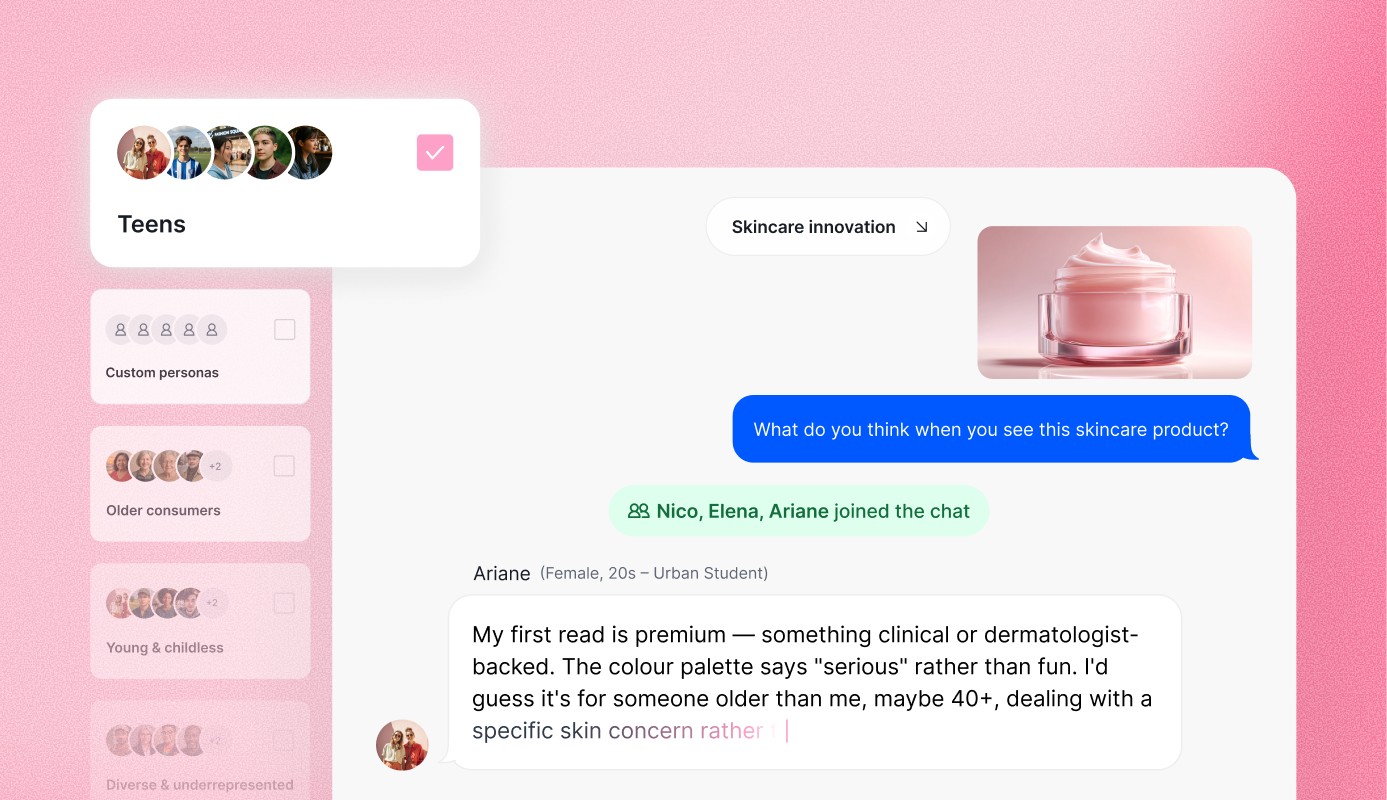

The biggest announcement was Appinio Conversations – a tool that lets you interact with scientifically validated synthetic AI personas to get rapid qualitative feedback. Within seconds, you can run a group chat with up to ten personas built on three science-backed dimensions: social demographics, past behaviour, and psychographics (using a framework developed in partnership with CRONBACH).

For tech companies, this is genuinely useful for early-stage exploration. Think: you have twelve potential product names or feature descriptors, but only the budget to properly test three. Conversations lets you run a fast, iterative screen before committing to a full quant study. It's not a replacement for real respondents, but as a hypothesis-building tool it changes what's possible when time and budget are tight.

Another new feature is an AI-powered insight search – query in plain language, get the most relevant past studies back instantly, regardless of how they were originally filed. Get more info on our latest features.

Research design trends: Fewer hours spent programming surveys

In 2026, study construction is moving away from manual work.

Questionnaire programming has historically been a tedious, error-prone necessity, and AI is now turning it into a streamlined, automated process. By shifting the researcher's role from "builder" to "refiner," these tools eliminate the "blank page" problem and allow teams to focus on strategic objectives rather than technical execution.

Key research design and programming features:

- Instant Automated Programming: Users can drag and drop Word, PDF, or Excel files to have them programmed automatically with full logic, randomization, and filtering in place.

- AI Draft Creation: Generates a complete first questionnaire draft from scratch based on specific research goals.

- Intelligent Wording & QA: Provides automated suggestions to clarify question wording, generates context-specific answer options, and checks for overall structural coherence.

- Collaborative Implementation: Recognizes feedback or comments from colleagues on the platform and suggests one-click fixes to implement those corrections.

- Global Scalability: Offers instant translation into over 40 languages while keeping all complex survey branching and logic fully intact.

Fieldwork: The data quality battle

If there was one big theme for market research in the tech industry, it was this: data quality.

More and more brands have been approaching Appinio because they're unhappy with the quality they're getting from their current vendors. And this is a trend that's been amplified by the rise of AI bots completing surveys.

Appinio's response is what we call a Data Shield. It’s a set of layered protections that includes AI-generated consistency checks tailored to each specific project, plus a machine learning cleaner trained on thousands of examples of deleted responses (gibberish answers, suspiciously fast completion times, implausible patterns). Human oversight remains part of the process; the AI flags, humans verify.
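To make the "AI flags, humans verify" idea concrete, here is a minimal Python sketch of rule-based flagging. It is not Appinio's Data Shield: the function names, the field names (`seconds`, `open_end`), and both thresholds are assumptions chosen purely for illustration, and a real cleaner would rely on trained models rather than two hand-written rules.

```python
from statistics import median


def looks_like_gibberish(text, min_vowel_share=0.2):
    """Crude heuristic (assumption): real words usually contain vowels."""
    letters = [c for c in text.lower() if c.isalpha()]
    if len(letters) < 3:
        return False  # too short to judge; leave it to other checks
    vowels = sum(c in "aeiou" for c in letters)
    return vowels / len(letters) < min_vowel_share


def flag_responses(responses, speed_ratio=0.33):
    """Return {respondent_id: [reasons]} for a human reviewer.

    Nothing is deleted here; the function only flags.
    speed_ratio is a hypothetical cut-off relative to the median duration.
    """
    typical = median(r["seconds"] for r in responses)
    flags = {}
    for r in responses:
        reasons = []
        if r["seconds"] < speed_ratio * typical:
            reasons.append("speeder")
        if looks_like_gibberish(r["open_end"]):
            reasons.append("gibberish")
        if reasons:
            flags[r["id"]] = reasons
    return flags


# Made-up example data
responses = [
    {"id": "a", "seconds": 300, "open_end": "I like the colour and the shape"},
    {"id": "b", "seconds": 40, "open_end": "nice design"},
    {"id": "c", "seconds": 280, "open_end": "sdfghjkl qwrt"},
    {"id": "d", "seconds": 320, "open_end": "Easy to hold"},
]
flags = flag_responses(responses)
print(flags)
```

The output is a review list rather than a cleaned dataset, which keeps the final decision with a person.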

The other fieldwork innovation worth noting is AI-driven probing questions for open-ended responses. If a respondent answers "colour" when asked what they like about a product design, the platform follows up with a targeted question to get more depth. The reported outcome: four times as many detailed answers, with no change in dropout rate, and 15% fewer deleted participants.

For tech companies that regularly use open-ended questions to capture product feedback or user sentiment, this is a meaningful upgrade without adding any burden on the respondent.

Analysis: Open-ends at the speed of closed questions

Open-ended analysis has historically been one of the biggest time sinks in research – read everything, code it, group by topic, assess sentiment. AI has changed that equation substantially.

Appinio already has sentiment recognition live, and four further capabilities are coming over the course of 2026: topic clustering, topic correlation, per-topic word frequency analysis, and per-topic sentiment. The end goal is to process open-ended questions at the same speed as closed questions – and to make the results just as immediately usable.

Storytelling: From raw results to shareable narrative

The executive summary feature – already live and heavily used – generates a complete project summary, overall conclusions, and key recommendations within seconds.

And there’s more to come. AI video summaries have considerable potential to guide stakeholders through their results.

This last one is more than a nice-to-have for tech companies specifically. Getting internal stakeholders – product managers, engineering leads, CMOs – to actually engage with research findings is a constant challenge. A short, clear video summary that explains what the data says and what to do about it is much easier to share across Slack than a 40-slide deck.

The effectiveness argument: why faster isn't enough

But how does research get not only faster but also more meaningful?

The answer is effectiveness: using methods that genuinely predict real-world outcomes like brand growth, market share, or product success. The idea had a moment about a decade ago when Byron Sharp's How Brands Grow landed, and then gradually faded from active discussion. In 2025 it came back. Clients asking about mental availability and category entry points quadrupled month-over-month at Appinio last year.

The stakes are higher now. AI efficiency without a strong methodological foundation just means you're producing more of the wrong thing, faster. Researchers who understand this and build their programmes accordingly will be the ones who can demonstrate real business impact.

Two strategic frameworks worth knowing

Our experts recommend two strategic foundations for any research programme:

- Category entry points and mental availability: Rooted in Byron Sharp's work, this approach identifies the triggers that bring consumers into a category, and measures how strongly your brand is linked to those triggers. The KPIs that come out of this kind of research have strong correlations with brand growth – and they're applicable to B2B and consumer tech alike. If you're a neobank, what situations or problems trigger someone to seek out your category? And is your brand the one they think of first?

- Psychographic motive and attitude profiles: Understanding whether your buyers are driven by status, achievement, or affiliation – and whether your messaging should be emotional, rational, or pragmatic – gives you a durable lens for evaluating everything else. When Appinio has used this framework to enrich ad testing (going beyond likability and uniqueness to also measure whether a category entry point is being communicated and whether it resonates with the right motivations), the predictive value of the test increases by roughly three to four times.
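For readers who want to see what "how strongly your brand is linked to those triggers" can look like in numbers, here is a small Python sketch. It assumes two metrics commonly used in the category entry point literature, mental market share (a brand's share of all brand-to-CEP links) and mental penetration (the share of respondents who link the brand to at least one CEP). The webinar did not specify which KPIs Appinio reports, so the function name, data shape, brand names, and entry points below are all hypothetical.

```python
def mental_availability(links, brand):
    """Compute two illustrative mental availability metrics.

    links: {respondent_id: {brand_name: set_of_category_entry_points}}
    """
    # All brand-to-CEP links across every respondent and every brand
    total_links = sum(
        len(ceps) for per_brand in links.values() for ceps in per_brand.values()
    )
    # Links that belong to the brand of interest
    brand_links = sum(len(per_brand.get(brand, ())) for per_brand in links.values())
    # Respondents who connect the brand to at least one entry point
    linked = sum(1 for per_brand in links.values() if per_brand.get(brand))
    return {
        "mental_market_share": brand_links / total_links if total_links else 0.0,
        "mental_penetration": linked / len(links) if links else 0.0,
    }


# Made-up survey answers for a neobank category
links = {
    "r1": {
        "NeoBank A": {"travelling abroad", "splitting a bill"},
        "NeoBank B": {"travelling abroad"},
    },
    "r2": {"NeoBank B": {"splitting a bill"}},
    "r3": {"NeoBank A": {"first salary"}, "NeoBank B": set()},
    "r4": {},
}
result = mental_availability(links, "NeoBank A")
print(result)
```

Tracking these figures over time, rather than as a one-off, is what turns them into a foundation that later ad tests and concept tests can be read against.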

These aren't new market research trends. But the argument is that AI makes running ad-hoc research so much easier that teams risk doing more of it without ever building the strategic foundation that makes each individual test meaningful. The insight-rich companies of 2026 will be the ones that use their efficiency gains to go deeper, not just wider.

Breaking research silos

One of the biggest shifts is the breaking of a pattern that is especially common in larger organisations: research that runs in silos.

One team runs brand tracking. A second team does ad testing. The creative agency runs campaign tracking. The product team runs its own qualitative concept testing. Everyone is client-centric. Nobody talks to each other. The brand team's insights never reach the product team. The agency's campaign data never informs the strategy team.

This is a structural problem that's especially pronounced in tech companies, where growth has often outpaced coordination, teams operate in sprints and pods with their own data cultures, and research is commissioned reactively rather than strategically.

The solution is moving from isolated research projects towards a connected research programme where each study builds on the last, and every data point connects to the next. AI can help make the transition smoother (shared platforms, consistent frameworks, faster analysis), but the desire to break silos has to come from the researchers themselves.

The practical implication: if you're in a research function right now, this is the argument you need to be making internally. Not "look at this cool AI feature" – but "here's how we can structure our research so that every project we run makes the next one smarter."

The evolving role of the researcher in times of AI

The question that came up most often in the webinar chat was: what does this mean for my job?

The honest answer: the work is shifting, not disappearing. Researchers will spend less time on questionnaire programming, data cleaning and manual open-end coding. They'll spend more time on the things that require human judgement: understanding client needs, designing effective research programmes, communicating findings to stakeholders who have competing priorities and short attention spans, and managing the change process so that insights actually get used.

That last point deserves emphasis. Running a study might take a week. Getting an organisation to actually change its behaviour based on the findings can take a year. That's where human skill – empathy, persuasion, relationship-building, navigating politics – is irreplaceable. AI doesn't do change management.

The old model was T-shaped: deep expertise in one methodology, broad awareness of others. The new model, described in the webinar as "comb-shaped", combines multiple areas of genuine depth with the breadth to speak credibly across different business functions. “You need to understand what the CMO needs, what the Product VP needs, what the brand team is trying to accomplish, and be able to translate research insights into the language of each.”

For researchers in tech, this is already becoming a prerequisite rather than a nice-to-have. The most valuable research professionals in fast-moving tech companies are the ones who can hold a conversation with an engineering lead about feature prioritisation in the morning, present brand health findings to the CMO in the afternoon, and challenge a product team's assumptions based on consumer data – all without losing credibility.

How to use the time saved by AI: invest it in learning. Specifically, learning about complex methodologies you've never had bandwidth to go deep on, and building the effectiveness capabilities – mental availability, psychographics, marketing science – that make research genuinely predictive rather than just descriptive.

Synthetic data: the longer game

The last topic – synthetic quantitative data – generates a lot of noise and little clarity.

The quality of synthetic data depends entirely on the quality of the training data. If you have a rich historical dataset for a core product in a core market, you can train a synthetic panel and simulate responses at scale – useful for ad testing and concept testing where the pattern of behaviour is well-established. If you're launching something genuinely new in a market you've never studied before, you still need real humans.

The result in practice will almost always be a blend: sometimes 90% synthetic and 10% human, sometimes the reverse. The ratio depends on what you're testing and what precedent exists in your data.

Today, the research flow is linear: design questionnaire > collect data > analyse. The data collection step sits in the middle and forces you to commit to your questions upfront. If you realise after fieldwork that you forgot to ask something, you're stuck.

In a world with mature synthetic data, that linearity breaks down. You upload your assets, get a summary, refine your questions, get updated results instantly – it becomes a circular, iterative loop rather than a one-shot process. The same AI features being built today (AI-generated questionnaire drafts, AI analysis, AI executive summaries) form the foundation for that future state.

It's not here yet. But the groundwork is being laid.

What these market research trends mean specifically for tech industry researchers

Most of what we discussed in the webinar applies broadly across industries. But a few threads are worth pulling on specifically for those working in or with tech companies:

- Release cycles won't slow down for research. The tools we’re building at Appinio are designed for a world where you need insight on a two-week timeline, not a two-month one. AI-generated questionnaire drafts, automated fielding, and instant executive summaries are all aligned with the velocity that product teams operate at. The opportunity for researchers is to be a real part of the sprint cycle rather than a gate that slows it down.

- Data quality is a competitive advantage. With AI-generated survey responses on the rise, companies that can guarantee clean, human-validated data have something real to offer. For tech companies running market sizing studies, pricing research, or feature prioritisation surveys, the quality of the underlying data is the thing that makes or breaks the decision. This is worth scrutinising in your vendor relationships.

- Global by default, not by exception. Most tech companies are either already international or plan to be. Instant multilingual fieldwork and cross-border result translation matter more in this context than in most others. The ability to run a study across six markets simultaneously, with consistent structure and quality, is a genuine operational unlock.

- Stakeholder engagement is the last hurdle. Tech companies are full of smart people with opinions about what customers want. Getting them to trust and act on research findings is often harder than running the research itself. AI video summaries and automated boards that tell a clear story are tools that help close that gap.

- Mental availability matters even in B2B. The category entry points framework is not just for consumer brands. For SaaS and enterprise tech, understanding what business problems trigger procurement teams to start looking for a solution in your category – and whether your brand comes to mind in those moments – is foundational strategic intelligence. It's underused in B2B research, and that's an opportunity.

Where things stand

The picture that emerged from our What's Hot in Market Research 2026 webinar is one of genuine, compounding change. Not disruption for its own sake, but a steady integration of AI across the parts of the research process where it creates the most value.

The researchers who will do best in this environment are the ones who treat AI as a tool for getting to the interesting part faster – not as an excuse to skip the hard thinking about what they're actually trying to learn, and why.

Louise's line from the webinar struck a chord: “AI is really flashy. It can do a lot of great things. But sometimes it makes sense to go back, read the boring books, read the scientific literature – to re-ground in what really matters and what's really effective.”

That's not a rejection of new tools. It's a reminder of what they're in service of.

If you want to go deeper on any of the topics covered here – including a live demo of Appinio Conversations, the AI questionnaire features, and the product roadmap for 2026 – the full recording of "What's Hot in Market Research 2026" is available to watch now.
